ChatGPT Try for Free - Overview


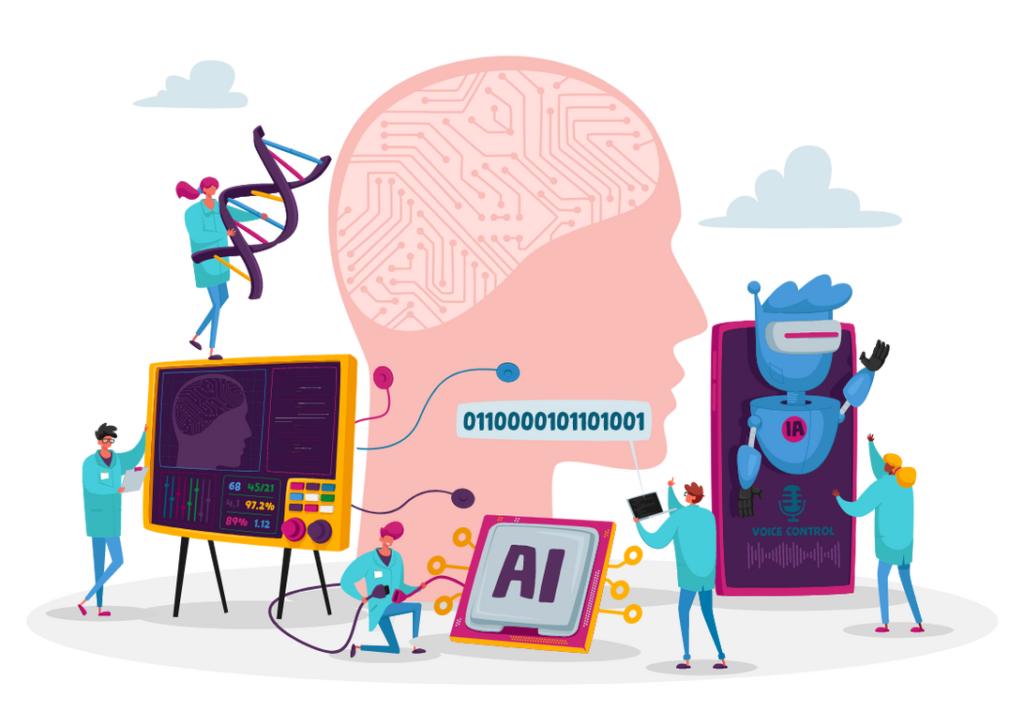

In this article, we'll delve deep into what a ChatGPT clone is, how it really works, and how you can create your own. In this post, we'll explain the fundamentals of how retrieval-augmented generation (RAG) improves your LLM's responses and show you how to easily deploy your RAG-based model using a modular approach with the open source building blocks that are part of the new Open Platform for Enterprise AI (OPEA). By carefully guiding the LLM with the right questions and context, you can steer it toward producing more relevant and accurate responses without needing an external information retrieval step. Fast retrieval is a must in RAG for today's AI/ML applications. If not RAG, then what can we use? Windows users can also ask Copilot questions, much as they interact with Bing AI chat. I rely on advanced machine learning algorithms and a huge amount of data to generate responses to the questions and statements that I receive. QAG (Question Answer Generation) Score is a scorer that leverages LLMs' high reasoning capabilities to reliably evaluate LLM outputs. It uses answers (usually either a 'yes' or 'no') to close-ended questions (which can be generated or preset) to compute a final metric score.
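As a rough illustration of how yes/no answers might be turned into a metric score, here is a minimal sketch. It assumes the score is simply the fraction of close-ended questions answered 'yes'; the text above does not specify the aggregation, and the function name `qag_score` is hypothetical. In practice the answers would come from an LLM judging the output; here they are passed in directly.

```python
from typing import Iterable


def qag_score(answers: Iterable[str]) -> float:
    """Return the fraction of close-ended questions answered 'yes'.

    Assumption: the final score is the share of 'yes' answers.
    Real QAG scorers may weight or group questions differently.
    """
    normalized = [a.strip().lower() for a in answers]
    if not normalized:
        raise ValueError("need at least one answer")
    # Reject anything that is not a plain yes/no verdict.
    invalid = [a for a in normalized if a not in ("yes", "no")]
    if invalid:
        raise ValueError(f"unexpected answers: {invalid}")
    return normalized.count("yes") / len(normalized)


if __name__ == "__main__":
    # Hypothetical verdicts for four close-ended questions.
    print(qag_score(["yes", "no", "Yes", "yes"]))
```

Keeping the verdicts binary is what makes the score easy to audit: each question can be inspected on its own to see why the total moved.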

LLM evaluation metrics are metrics that score an LLM's output based on criteria you care about. As we stand on the edge of this breakthrough, the next chapter in AI is just beginning, and the possibilities are endless. These models are expensive to power and hard to keep up to date, and they like to make shit up. Fortunately, there are numerous established methods available for calculating metric scores: some make use of neural networks, including embedding models and LLMs, while others are based solely on statistical analysis. "The goal was to see if there was any task, any setting, any domain, any anything that language models could be useful for," he writes. If there is no need for external data, don't use RAG. If you can handle increased complexity and latency, use RAG. The framework takes care of building the queries, running them on your data source and returning them to the frontend, so you can focus on building the best possible data experience for your users. G-Eval is a recently developed framework from a paper titled "NLG Evaluation using GPT-4 with Better Human Alignment" that uses LLMs to evaluate LLM outputs (aka.


So ChatGPT o1 is a better coding assistant, and my productivity improved a lot. Math - ChatGPT uses a large language model, not a calculator. Fine-tuning involves training the large language model (LLM) on a specific dataset relevant to your task. Data ingestion usually involves sending data to some kind of storage. If the task involves simple Q&A or a fixed data source, don't use RAG. If faster response times are preferred, don't use RAG. Our brains evolved to be fast rather than skeptical, particularly for decisions that we don't think are all that important, which is most of them. I don't think I ever had an issue with that, and to me it looks like simply bringing it in line with other languages (not a big deal). This lets you quickly understand the problem and take the necessary steps to resolve it. It's important to challenge yourself, but it is equally important to be aware of your capabilities.
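The RAG rules of thumb in this post can be collected into one small decision function. This is only a sketch: the `TaskProfile` fields, the function name `should_use_rag`, and the order in which the rules are checked are assumptions made for illustration, not something the text prescribes.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Hypothetical description of a task, one flag per rule of thumb."""
    needs_external_data: bool
    simple_qa_or_fixed_source: bool
    latency_sensitive: bool
    can_absorb_complexity: bool


def should_use_rag(profile: TaskProfile) -> bool:
    """Apply the rules of thumb; any 'don't use RAG' rule wins."""
    if not profile.needs_external_data:
        return False  # no need for external data
    if profile.simple_qa_or_fixed_source:
        return False  # simple Q&A or a fixed data source
    if profile.latency_sensitive:
        return False  # faster response times are preferred
    # Otherwise RAG is an option only if the added complexity
    # and latency are acceptable.
    return profile.can_absorb_complexity


if __name__ == "__main__":
    profile = TaskProfile(
        needs_external_data=True,
        simple_qa_or_fixed_source=False,
        latency_sensitive=False,
        can_absorb_complexity=True,
    )
    print(should_use_rag(profile))
```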

After using any neural network, editorial proofreading is necessary. In Therap JavaFest 2023, my teammate and I wanted to create games for kids using p5.js. Microsoft finally announced early versions of Copilot in 2023, which work seamlessly across Microsoft 365 apps. These assistants not only play a vital role in work situations but also provide great convenience in the learning process. GPT-4's Role: Simulating natural conversations with students, offering a more engaging and realistic learning experience. GPT-4's Role: Powering a digital volunteer service to provide assistance when human volunteers are unavailable. Latency and computational cost are the two major challenges when deploying these applications in production. A simple sampling-based approach can be used to fact-check LLM outputs. It assumes that hallucinated outputs are not reproducible, whereas if an LLM has knowledge of a given concept, sampled responses are likely to be similar and contain consistent facts. Learn in depth about LLM evaluation metrics in this unique article. It helps structure the data so it's reusable in different contexts (not tied to a specific LLM). The tool can access Google Sheets to retrieve data.
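The sampling-based fact-check idea (consistent samples suggest knowledge, divergent samples suggest hallucination) can be sketched as follows. This is a minimal illustration under stated assumptions: it compares responses with plain word-overlap (Jaccard) similarity, which is a stand-in chosen for simplicity and not the comparison method any particular tool uses. The function names are hypothetical, and the sampled responses are passed in as strings instead of being drawn from a real model.

```python
import re
from typing import List, Set


def _tokens(text: str) -> Set[str]:
    """Lowercase alphanumeric word set of a response."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity in [0, 1] (assumed stand-in metric)."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def consistency_score(answer: str, samples: List[str]) -> float:
    """Average similarity between an answer and re-sampled responses.

    A low score means the samples disagree with the answer, which
    under the assumption above hints at a hallucination.
    """
    if not samples:
        raise ValueError("need at least one sampled response")
    return sum(jaccard(answer, s) for s in samples) / len(samples)


if __name__ == "__main__":
    answer = "The capital is Paris"
    # Hypothetical re-sampled responses to the same prompt.
    print(consistency_score(answer, ["The capital is Paris", "Paris is the capital"]))
    print(consistency_score(answer, ["Lyon", "Marseille"]))
```

Word overlap is crude: it misses paraphrases and rewards shared filler words, so a production check would likely swap in a stronger comparison while keeping the same sample-and-compare structure.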

